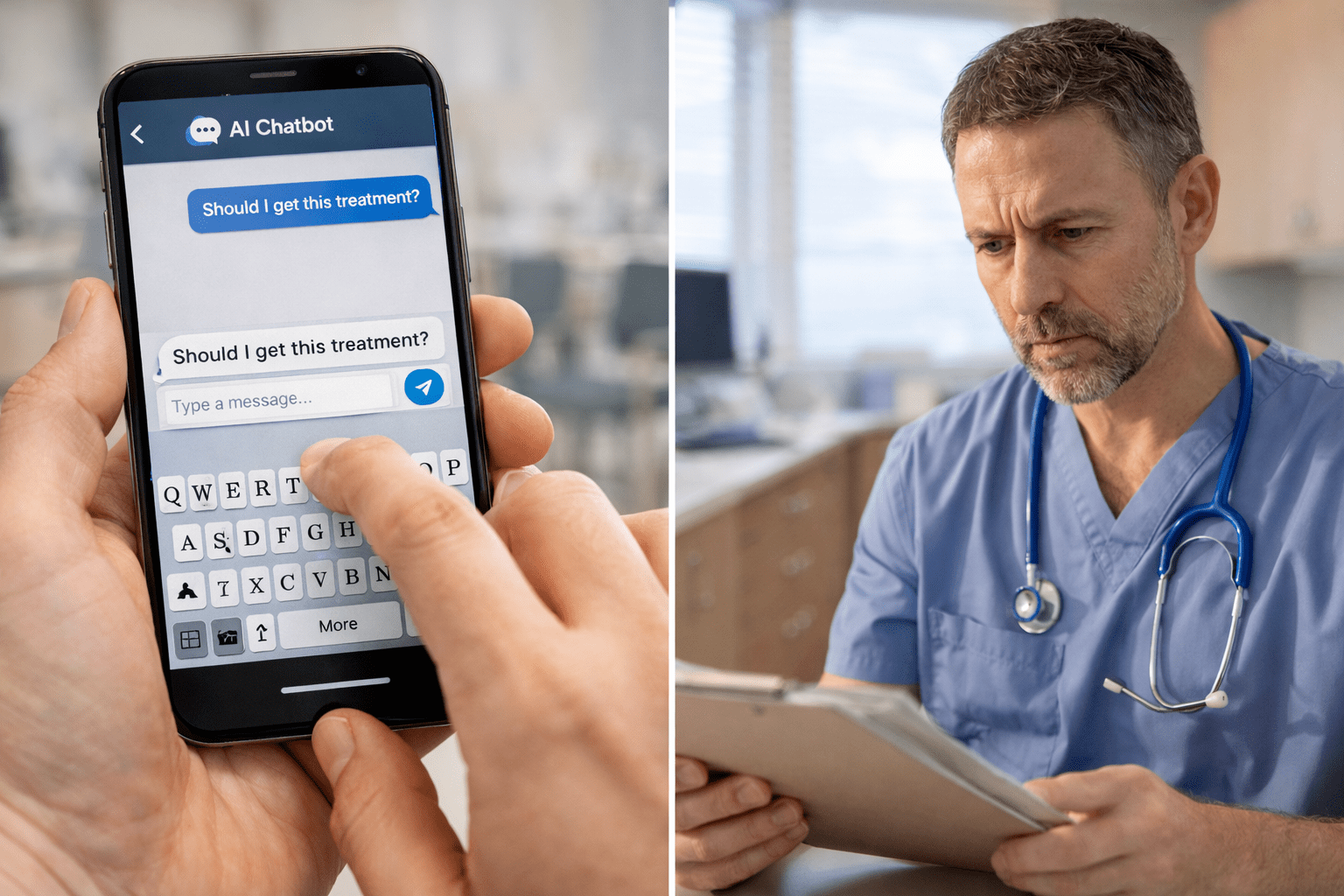

Half of AI Health Advice Is Wrong—And Patients Have No Idea

Before your next appointment begins, there is a chance that your new patient consulted an AI chatbot before calling you. They asked about symptoms. Treatment options. Whether they really need to see a specialist or if there’s a “natural” alternative.

And there’s a 50% chance the chatbot gave them bad information—delivered with the confidence of a tenured professor.

A February 2025 study published in BMJ Open tested five popular AI chatbots—ChatGPT, Gemini, Meta AI, DeepSeek, and Grok—with 250 health and medical questions across cancer, vaccines, stem cells, nutrition, and athletic performance.

Half the responses were problematic: 30% were “somewhat” problematic and 20% were “highly” problematic, meaning they could plausibly direct users toward ineffective treatment or actual harm.

Grok performed worst, generating highly problematic responses 58% of the time. Gemini performed best—but “best” still means a significant percentage of answers were flawed.

The kicker? Every answer was delivered with certainty. No hedging. No disclaimers. Just authoritative-sounding nonsense dressed up as medical guidance.

The Problem Isn’t Just Accuracy—It’s Authority

AI chatbots don’t reason. They don’t weigh evidence. They predict word sequences based on statistical patterns in their training data.

That training data includes Q&A forums, social media, and only 30–50% of published scientific studies, because most research is behind paywalls.

So when a patient asks, “Should I try stem cell therapy for my knee pain?” the chatbot doesn’t consult peer-reviewed orthopedic literature. It synthesizes Reddit threads, wellness blogs, and whatever open-access papers happened to be in its dataset.

Then it presents the answer as if it just consulted the Mayo Clinic.

The study also found that chatbot references were fabricated or incomplete 60% of the time. Hallucinated citations. Made-up journal articles. Links that go nowhere.

And the readability? College-level complexity across the board—meaning the average patient is getting information they can’t fully understand, from sources that don’t exist, delivered with unearned confidence.

What This Means for Your Practice

Your patients are arriving pre-educated—or pre-misinformed.

They’ve already decided what they believe about vaccines, cancer treatments, or whether they need surgery. They’re not coming to you for information. They’re coming for confirmation.

And if your messaging doesn’t address the chatbot misinformation they’ve already absorbed, you’re starting the conversation three steps behind.

This isn’t a patient education problem. It’s a trust architecture problem.

Because the gap between what patients believe and what your marketing communicates is where they disengage.

If your website says, “We offer advanced care,” but they’ve read (via ChatGPT) that “advanced care” is code for unnecessary procedures, they won’t book an appointment. They’ll move on to the next practice that speaks their language.

The Belief-First Solution

Most practices respond to AI chatbot medical misinformation with more information.

More blog posts. More FAQs. More “myth vs. fact” content that nobody reads.

But information doesn’t change beliefs. Evidence does.

Your marketing needs to start with one question: What do you want patients to believe about your practice?

That you’re the most transparent?

The most evidence-based?

The most willing to explain what other practices won’t?

Once that belief is clear, every piece of content—your ads, your website, your intake forms—exists to prove it.

Not by saying it. By showing it.

If patients arrive believing “all specialists just want to do surgery,” your messaging can’t be defensive. It has to be specific. “Here’s how we decide when surgery is—and isn’t—the right call. Here’s the data. Here’s the alternative. Here’s why we’re telling you this before you even sit down.”

That’s not content. That’s evidence.

The Accountability Factor

AI chatbots don’t have accountability. They don’t have reputations. They don’t live in your market.

You do.

And that’s the advantage most practices aren’t using.

When a patient gets bad advice from ChatGPT, there’s no recourse. No follow-up. No correction. Just another search query.

When they get advice from you—and you’re local, accountable, and willing to explain your reasoning—that’s a different transaction.

The practices that win in this environment aren’t the ones with the most content. They’re the ones with the clearest belief system and the most consistent proof.

What Happens Next

AI chatbots aren’t going away. Neither is the misinformation they generate.

The study authors called for public education, professional training, and regulatory oversight.

Fine. But while we’re waiting for that, your patients are still asking Grok whether they need a root canal.

The question isn’t whether AI chatbot medical misinformation exists. The question is whether your marketing is designed to counter it—or whether you’re pretending it’s not happening.

Because half of what your patients think they know is wrong. And they learned it from a chatbot that sounded smarter than you.

Source: Life Sciences News