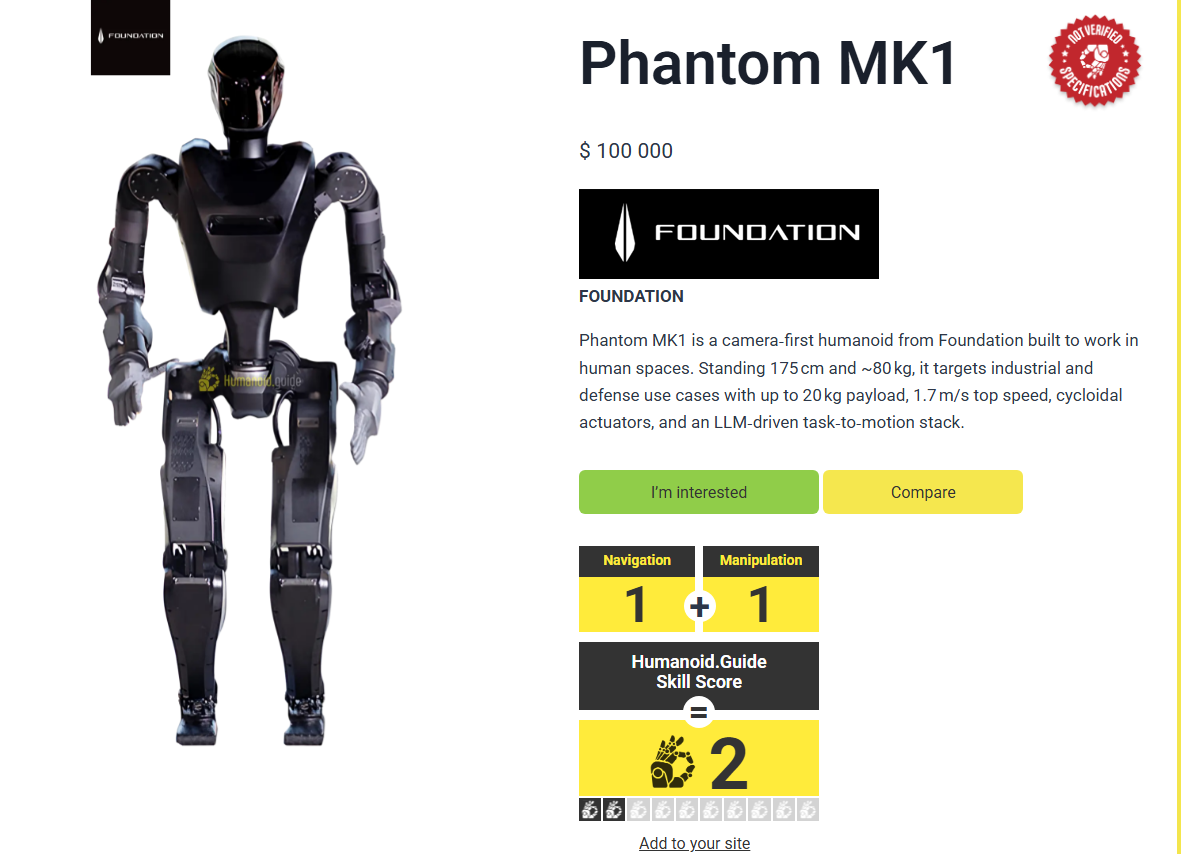

The Phantom MK-1 looks exactly like what you’d expect from a combat robot designed by a 14-year Marine Corps veteran with multiple tours in Iraq and Afghanistan. (The image above is not the Phantom MK-1.)

Jet black steel. Tinted glass visor. Gripping a shotgun, pistol, revolver, and M-16 replica.

Not exactly the friendly warehouse assistant vibe most humanoid robots are going for.

Foundation — the San Francisco startup behind Phantom — has already secured $24 million in combined research contracts with the U.S. Army, Navy, and Air Force. Two Phantoms were shipped to Ukraine in February for frontline reconnaissance. Marine Corps “methods of entry” training starts soon, teaching the robots to blow doors open so human soldiers don’t have to.

“We think there’s a moral imperative to put these robots into war instead of soldiers,” says Mike LeBlanc, Foundation’s co-founder.

Moral imperative. That’s the pitch.

The Slippery Slope From “Human-in-the-Loop” to Full Autonomy

Foundation insists Phantom will always require human authorization before engaging targets — matching current Pentagon protocols that demand a human green light before automated systems can fire.

Except AI-powered drones in Ukraine are already assessing targets and autonomously firing because Russian radio jamming renders remote operation ineffective. When your adversary decides autonomous operation is acceptable, what stops the U.S. from reciprocating in the fog of war?

“It’s a slippery slope,” says Jennifer Kavanagh, director of military analysis at Defense Priorities. “The appeal of automating things and having humans out of the loop is extremely high.”

Then there’s the Trump Administration’s recent move: ordering federal agencies and military contractors to stop doing business with Anthropic, the AI firm widely seen as the most safety-conscious of the big players. Anthropic’s contract specifically prohibited its technology from surveilling American citizens or programming autonomous weapons to kill without human involvement.

The White House refused to be bound by those restrictions.

Ukraine Is Already a Robot War

When LeBlanc took Phantom to Ukraine, what he discovered was “really shocking”: a complete robot war in which robots are the primary fighters and humans provide support. Ukraine now launches up to 9,000 drones every day. AI-enhanced quadcopters can attack Russian soldiers without human intervention when communications fail. Drones guided by computer vision can identify and eliminate specific targets, even flying through windows to assassinate individuals.

In late January, three bloodied Russian soldiers emerged from a ruined building to surrender to an armed Ukrainian ground robot, a kind of small, unmanned tank.

The Economics of Droid Battles

LeBlanc argues that humanoid soldiers are a natural extension of existing autonomous systems, such as drones. Compared with sending teenage grunts into harm’s way, with all the attendant political backlash, trauma, and risk of stress-induced war crimes, humanoid soldiers would, in his view, offer a more resilient alternative with greater restraint and precision.

Robots don’t suffer from fatigue or fear. They can operate continuously in extreme conditions while remaining immune to radiation, chemical, and biological agents.

And eventually, LeBlanc believes, giant armies of humanoid robots will nullify each side’s tactical advantage much as nuclear deterrence does, exponentially decreasing escalation risks.

“Droid battles, with a bunch of drones overhead and humanoids walking out towards each other, become an economic conflict,” he says.

But if waging war remotely and autonomously keeps the human toll minimal, that alone may raise risk tolerance, meaning more operations with higher escalation potential. If a nation can wage war without the political cost of bringing home flag-draped coffins, will it be more likely to engage in unnecessary conflicts?

“The human cost of war sometimes keeps us out of war,” says Kavanagh.

The Accountability Problem

If a humanoid robot malfunctions and commits a war crime or kills a noncombatant, who’s held responsible? The software programmer? The commanding officer? Current international law isn’t equipped to address “algorithmic accountability,” leaving a legal vacuum in the face of tragedy.

Then add in the well-documented algorithmic biases that blight AI facial-recognition software. Set against a drastic militarization of American society — heavily armed ICE officers swarming U.S. cities, the National Guard deployed to six states last year, local police equipped with armored vehicles left over from the Forever Wars — the specter of AI-powered soldiers with opaque mission directives has civil-liberty alarm bells clanging.

“The plethora of legal, ethical, and accountability concerns outweigh any potential benefits,” says Bonnie Docherty, a lecturer at Harvard Law School’s International Human Rights Clinic.

The Arms Race Is Already Happening

The Phantom MK-2 is due in April with numerous upgrades: consolidated electronics that reduce short-circuit risk, waterproofing, larger battery packs, and the ability to carry 175-pound loads. Foundation aims to eventually build 30,000 a year. Once production hits half a million units, each will probably cost less than $20,000.

Co-founder Sankaet Pathak eventually envisions thousands-strong swarms of Phantoms conducting complex military operations.

Authoritarian regimes, including Russia and China, are developing dual-use technology, drawing the West into a contest to create ever more powerful and efficient killing machines in human form.

“World war is bad,” says Pathak, “but a cold war is genuinely a good thing, because it forces everybody to innovate at a very fast pace. We want China to have humanoid robots, we want America to have humanoid robots, everybody to have humanoid robots.”

U.N. Secretary-General António Guterres and the International Committee of the Red Cross have jointly called on states to conclude, by year’s end, a legally binding treaty prohibiting autonomous systems that function without “meaningful human control.” More than 120 nations support the measure.

Major military powers like the U.S., Russia, and Israel are dragging their heels.

“Right now, what you’re seeing is the first flatfooted and clumsy attempt at how robots are going to fight our wars,” says LeBlanc. “But they’re really waiting for the start of the show.”

Source: Time